The digital landscape is rapidly evolving, with artificial intelligence leading the charge. As powerful AI tools like ChatGPT become integrated into our daily routines, a crucial question emerges: what is the environmental and economic cost of this technological revolution? We often take the speed and accessibility of modern search engines for granted, but the energy demands behind these systems vary dramatically.

For decades, Google Search has epitomized efficiency, handling billions of queries daily. Years of refinement have made each individual search request highly energy-efficient. Generative AI changes that picture: answering a query with a large language model takes far more computation, which exposes a potential “AI Margin Trap” that demands our attention.

The Hidden Energy Cost of AI

Think about a typical Google search: it’s a finely tuned machine, delivering relevant results almost instantly. Each query consumes very little energy, largely thanks to highly optimized algorithms and massive, efficient data centers that have been tuned over decades.

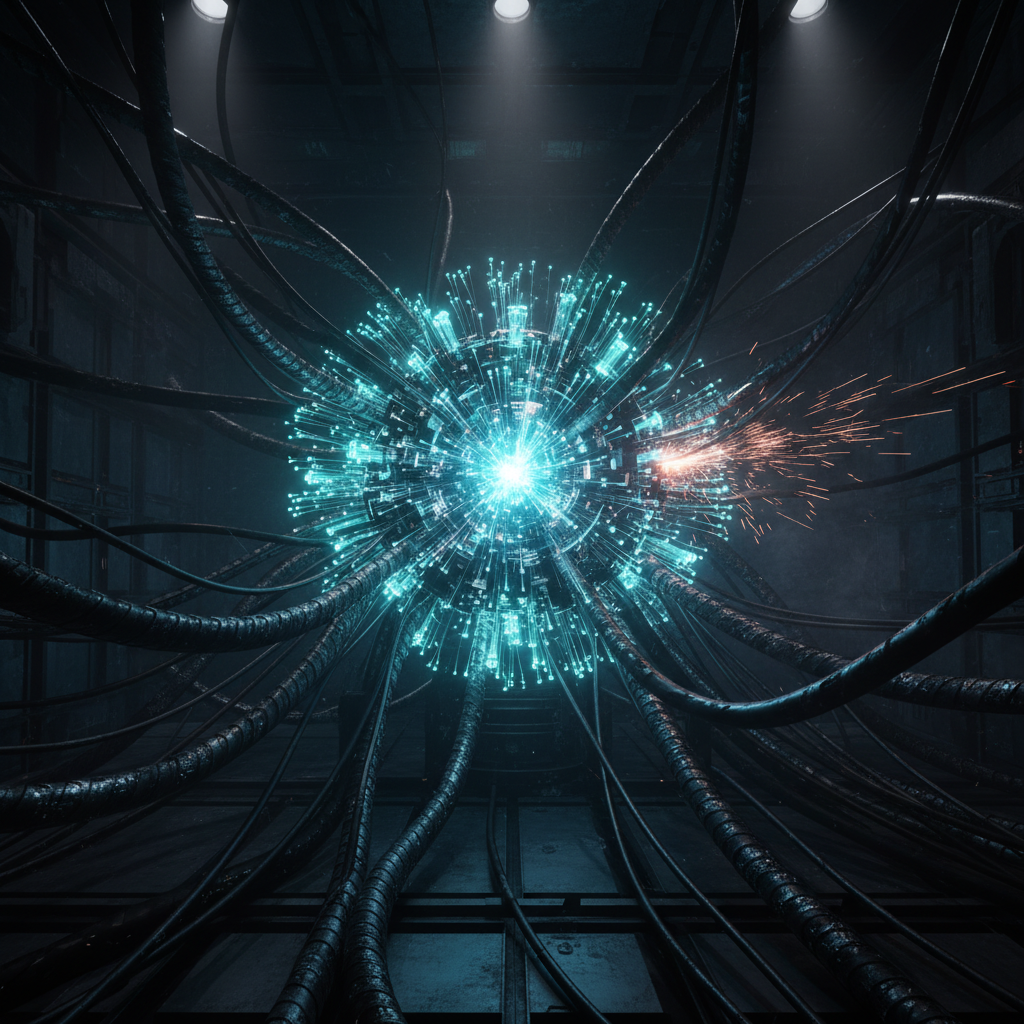

However, the new era of conversational AI, powered by large language models (LLMs) like those behind ChatGPT, operates on a fundamentally different scale. These models are vast, containing billions of parameters and requiring immense computational power for both training and inference. Generating a unique, human-like response from an LLM involves far more complex calculations than retrieving indexed web pages.

Each interaction with an AI chatbot can demand significantly more energy than a traditional search. This is primarily due to heavy use of graphics processing units (GPUs) and to the way LLMs produce text: the reply is generated piece by piece, and every piece requires another pass through the model. Where traditional search is like a quick lookup in a meticulously organized library, AI generation is akin to having a supercomputer write a custom essay for you on the fly.

Understanding the “AI Margin Trap”

The “AI Margin Trap” describes the gap between the energy it takes to produce an AI-generated answer and the energy it takes to serve a traditional search result, along with what that gap does to the bottom line. If AI-powered search, which is inherently more energy-intensive per query, were to replace or even substantially augment traditional search at scale, global energy demand could skyrocket. This isn’t just an environmental concern; it poses a significant economic challenge for tech companies.

Imagine the energy bill for processing billions of AI-generated responses daily instead of billions of traditional search results. The operational costs for companies like Google or Microsoft, already managing immense infrastructure, would surge dramatically. This increase in energy expenditure could erode profit margins, making widespread AI integration less sustainable financially.
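
To make that energy bill concrete, here is a rough back-of-envelope sketch. Every number in it is a placeholder chosen for illustration, not a measurement: the query volume, the per-query energy figures, and the electricity price are all assumptions, and the function name `daily_energy_cost_usd` is made up for this example.

```python
def daily_energy_cost_usd(queries_per_day: float,
                          wh_per_query: float,
                          usd_per_kwh: float) -> float:
    """Return the daily electricity cost in USD for a given query volume."""
    kwh_per_day = queries_per_day * wh_per_query / 1000.0  # convert Wh to kWh
    return kwh_per_day * usd_per_kwh


# Placeholder inputs (assumptions for illustration only, not real data).
QUERIES_PER_DAY = 1_000_000_000   # hypothetical daily query volume
USD_PER_KWH = 0.10                # hypothetical electricity price
SEARCH_WH_PER_QUERY = 0.3         # hypothetical energy per traditional search
AI_WH_PER_QUERY = 3.0             # hypothetical energy per AI-generated answer

search_cost = daily_energy_cost_usd(QUERIES_PER_DAY, SEARCH_WH_PER_QUERY, USD_PER_KWH)
ai_cost = daily_energy_cost_usd(QUERIES_PER_DAY, AI_WH_PER_QUERY, USD_PER_KWH)

print(f"Traditional search: ${search_cost:,.0f} per day")
print(f"AI answers:         ${ai_cost:,.0f} per day")
print(f"Cost multiple:      {ai_cost / search_cost:.1f}x")
```

The useful takeaway is the shape of the calculation rather than the figures: the bill scales linearly with energy per query, so whatever the real multiple between AI and traditional search turns out to be, it carries straight through to operating cost unless revenue per query rises with it.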

Moreover, the environmental footprint would grow substantially, potentially leading to increased carbon emissions from power generation. Data centers consume vast amounts of electricity, and the additional burden from ubiquitous AI could strain existing grids and add to climate concerns. It’s a classic example of technological advancement clashing with real-world resource constraints.

This trap isn’t just hypothetical; it’s a real consideration for tech giants investing heavily in AI. They must balance the demand for cutting-edge AI features with the practicalities of energy supply and cost. The path to truly scalable AI hinges on finding solutions that significantly reduce its energy footprint without compromising performance.

Paving the Way for Sustainable AI

Addressing the “AI Margin Trap” requires a multi-faceted approach, focusing on innovation in hardware, software, and operational strategies. The good news is that researchers and engineers are actively working on making AI more efficient. This includes developing more energy-efficient AI models and specialized hardware tailored for AI workloads.

Here are some key areas of focus:

- Model Optimization: Developing smaller, more efficient LLMs that can perform similar tasks with fewer parameters and less computational power. Techniques like quantization and pruning are crucial here.

- Hardware Innovation: Designing new AI accelerators and specialized chips that are optimized for AI computations, consuming less energy than general-purpose GPUs. This includes novel chip architectures and cooling solutions.

- Algorithmic Efficiency: Creating smarter algorithms that require fewer steps or less data to achieve desired AI outcomes, reducing the overall processing load. This is a constant area of research in machine learning.

- Renewable Energy Integration: Powering data centers with 100% renewable energy sources to shrink the carbon footprint, even as energy consumption rises. Many tech companies are already committed to this goal.
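
As an illustration of the quantization mentioned under Model Optimization, here is a minimal sketch of one common variant, symmetric 8-bit quantization, written in plain Python. The weight values and the helper names `quantize_int8` and `dequantize` are invented for this example; production systems rely on dedicated libraries and more sophisticated schemes.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in quantized]


# Made-up weights standing in for a tiny slice of a model.
weights = [0.52, -1.27, 0.003, 0.9, -0.41]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("scale:", scale)
print("largest rounding error:", max_error)
```

Each weight now fits in one byte instead of the four bytes of a 32-bit float, at the price of a rounding error of at most half the scale per weight. That trade of a little precision for less memory and cheaper arithmetic is the basic idea behind quantized models.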

The integration of AI into search and other critical services is inevitable and exciting. However, it’s paramount that this progress is pursued responsibly, with a clear understanding of its energy implications. The goal is to avoid falling deeper into the AI Margin Trap by proactively investing in efficiency and sustainability.

Ultimately, the challenge of the “AI Margin Trap” underscores the need for conscious design and deployment of artificial intelligence. By prioritizing energy efficiency alongside performance, we can ensure that AI continues to advance in a way that is innovative as well as environmentally and economically sustainable. The future of AI depends not just on what it can do, but on how efficiently it can do it.

Source: Google News – AI Search