The world of wearable technology is heating up, and nowhere is this more apparent than in the smart eyewear market. Glasses that do more than correct vision have long been a staple of science fiction. Now, with the underlying technology advancing rapidly, two tech giants, Google and Meta, are setting the stage for what looks like smart eyewear’s first true showdown.

Google’s re-entry into this space with its rumored AI-powered glasses is poised to challenge Meta’s already popular Ray-Ban Smart Glasses. This head-to-head battle isn’t just about product features; it’s about defining how we interact with digital information in the physical world. Here is what each company brings to the table and what the competition means for consumers.

Meta Ray-Ban: Fashion Meets Function

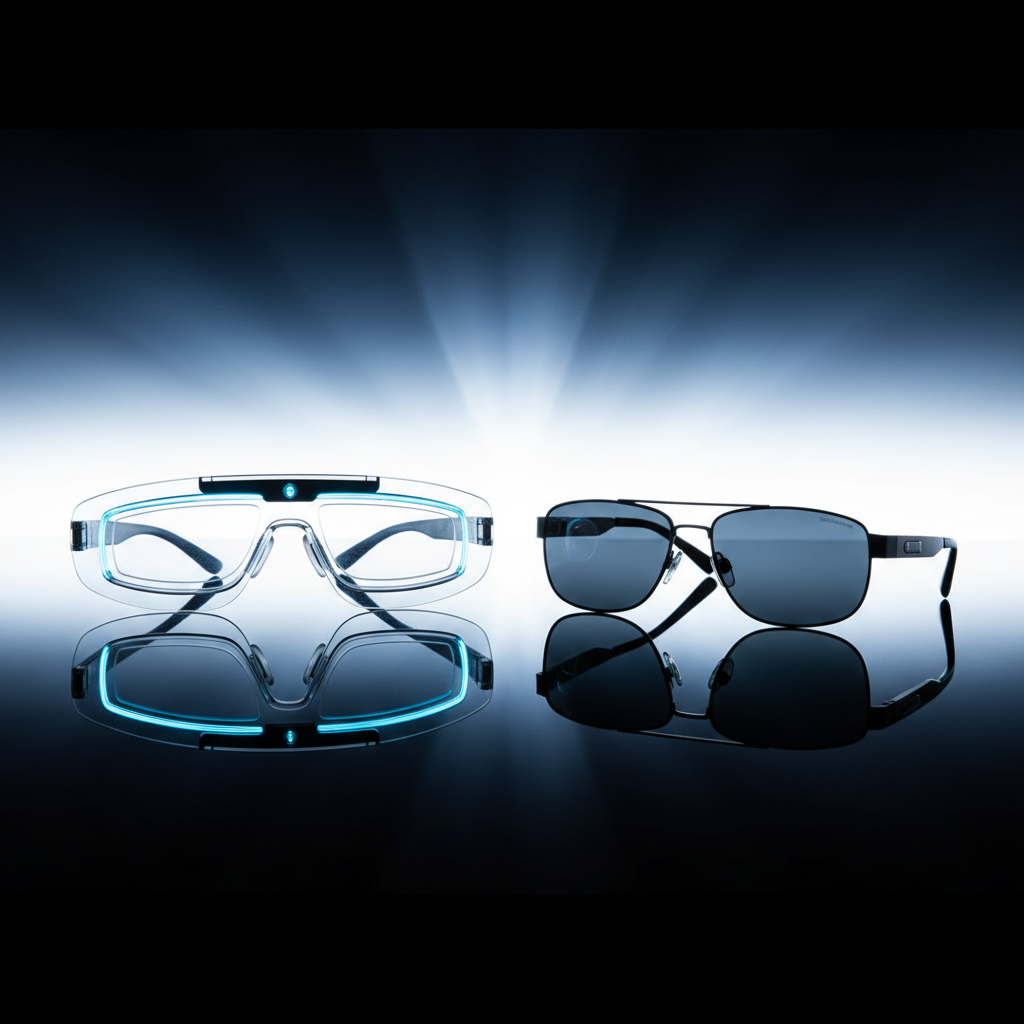

Meta, in collaboration with eyewear giant EssilorLuxottica, launched the Ray-Ban Smart Glasses as the successor to its earlier Ray-Ban Stories model. The glasses are designed with discretion and style in mind and look remarkably like classic Ray-Ban frames. They blend everyday wearability with smart capabilities, making them a fashion-forward choice for early adopters.

The primary features of the Meta Ray-Ban Smart Glasses revolve around capturing and sharing moments. Users can take photos and videos hands-free, listen to music or podcasts through open-ear speakers, and make calls. Recent updates have added Meta AI integration, which lets users issue voice commands to identify objects, translate languages, and get real-time information, turning the glasses into a voice-driven assistant.

- Discreet Design: Aesthetically pleasing and closely resembling regular Ray-Ban frames.

- Hands-Free Capture: Effortlessly snap photos and record videos of your daily life.

- Integrated Audio: Open-ear speakers for music, podcasts, and calls without blocking ambient sound.

- Meta AI: Voice-activated assistant for real-time information, translation, and more.

- Social Sharing: Seamless integration with Meta’s platforms for sharing content.

Google’s AI Glasses: A New Vision for Augmented Reality

Google has a history with smart glasses, famously launching Google Glass over a decade ago, which ultimately struggled with privacy concerns and mainstream adoption. However, the company has not given up on the vision of smart eyewear, and its upcoming “AI Glasses” (often referred to as Project Iris) are expected to be a significant step forward. This new generation aims to use advances in AI and augmented reality (AR) to create a more immersive and useful experience.

While official details are scarce, leaks and patents suggest Google’s AI Glasses will focus heavily on advanced AR capabilities, projecting digital information directly into the user’s field of view. This could range from real-time navigation overlays to instant translation of signs or conversations, all powered by sophisticated on-device AI. Unlike Meta’s social-first approach, Google appears to be targeting a more utilitarian and informational role, enhancing daily life with context-aware data.

Initial prototypes indicate a more technologically advanced, though potentially less discreet, design compared to Meta’s offering. The focus here is on robust functionality and on pushing the boundaries of what augmented reality can achieve in a wearable form factor. Google’s ecosystem of services, from Search to Maps to Translate, puts it in a strong position to build deep AI integration and contextual awareness into these glasses.

The Battle of Philosophies: Social vs. AI Utility

The competition between Google and Meta isn’t merely about who builds the better pair of smart glasses; it’s about fundamentally different philosophies for how this technology should integrate into our lives. Meta’s approach with Ray-Ban Smart Glasses is about enhancing social connection and casual content creation, prioritizing fashion and ease of use in familiar form factors. It’s about being present while still capturing moments and accessing information discreetly.

Google, on the other hand, seems to be charting a course for deep utility and enhanced perception through augmented reality and AI. Its vision appears to be about augmenting human intelligence and capabilities by providing an informational layer over the physical world. This difference in core purpose will likely attract distinct user bases, even if the technologies converge over time.

What This Means for the Future of Wearable Tech

This escalating rivalry between two tech giants is a strong indicator that smart eyewear is finally maturing beyond experimental prototypes into viable consumer products. Their significant investments suggest that glasses could become the next major computing platform after the smartphone. The competition is likely to accelerate innovation, improve design, and eventually drive down costs, making smart glasses accessible to a broader audience.

As Google and Meta push the boundaries, we can expect to see advancements in battery life, display technology, and miniaturization of powerful AI chips. The “first real fight” in smart eyewear is not just a battle for market share; it’s a critical step towards realizing the long-held promise of ubiquitous computing that truly integrates with our perception of the world.

Source: Google News – AI Search